Voice and Identity

Source, filter, biometric

The Voice and Identity project (AHRC, 2015-2019) addressed the differences between auditory-acoustic and automatic voice analysis methods, with the aim of improving forensic voice comparison by making the process more transparent, validated and replicable. The project had a number of strands relating to voice analysis and modelling, individual variation in speech, forensic voice analysis techniques, and the convergence of linguistic information with speech technology. See the project summary on GTR here.

I joined the project in 2018, and my contributions relate to the question of individual variation in speech, particularly variation in supralaryngeal voice quality.

Supralaryngeal voice quality

When we talk about voice quality, we are often talking about laryngeal voice quality. Words such as "creaky", "breathy" and "hoarse" primarily describe what's going on with the vocal folds, rather than the vocal tract. Supralaryngeal voice quality, on the other hand, relates specifically to qualities of the voice due to structures of the vocal tract, and how a person habitually holds these articulators. This gives their voice an overall "quality" which is audible most of the time, but particularly pronounced for some sounds. For instance, the well-known characteristics of Sean Connery's voice occur because he habitually held his tongue slightly further back in the mouth than usual, giving his voice an audible character. This "character" in a voice is one tool that listeners use to identify who is talking.

In forensic speech science, one common method for quantifying voice quality is to use the vocal profile analysis (VPA) scheme first put forward by John Laver. Practitioners listen to a voice and assign a degree of severity to each of a list of vocal tract settings such as lip rounding or raised larynx. Users of the scheme are taught to identify each of these settings by listening alone.

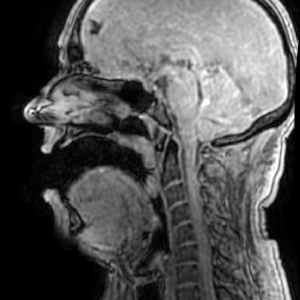

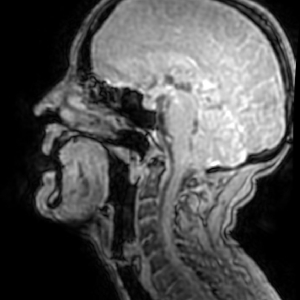

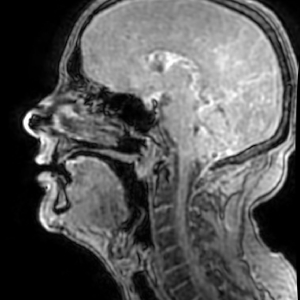

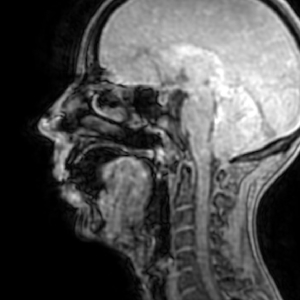

Using MRI, it is possible to look inside the vocal tract and start to address questions about supralaryngeal voice quality. First of all, are subjects doing what we think they are doing? For example, if they are producing what is considered a "raised larynx" voice, is their larynx actually raised compared to normal, and are any other articulators involved? Secondly, how do different degrees of articulator change correspond to the perceptually-defined degree of severity? For example, is there a linear relationship between the amount the larynx is raised in millimetres and the severity it is assigned in a VPA assessment? Finally, do different subjects realise each setting in the same way?

Answering these questions will provide critical and long-overdue information about how the vocal articulators are involved in producing different voice qualities, and how much this can vary between individuals. Doing so will add to our understanding of the VPA scheme and permit greater consistency and transparency in its use.
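As an illustration of the second question above, one simple way to check for a linear relationship between larynx displacement and assigned severity would be an ordinary least-squares fit. The sketch below is not the project's analysis method: the function name `linear_fit`, the variable names and the numbers are all hypothetical placeholders, not project data. It also treats the severity score as a plain number, which is itself an assumption.

```python
from typing import Sequence, Tuple


def linear_fit(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float, float]:
    """Least-squares line y = slope * x + intercept, plus R squared.

    R squared close to 1 suggests the relationship is well described
    by a straight line; lower values suggest it is not.
    """
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("x and y must have the same length (at least 2)")

    mean_x = sum(x) / n
    mean_y = sum(y) / n

    # Sums of squares and cross-products about the means.
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    if sxx == 0 or syy == 0:
        raise ValueError("x and y must each contain more than one distinct value")

    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = (sxy ** 2) / (sxx * syy)
    return slope, intercept, r_squared


# Hypothetical placeholder values, NOT measurements from this project:
# larynx raising in millimetres, and the severity assigned by a listener.
displacement_mm = [0.0, 2.0, 4.0, 6.0]
severity = [1, 2, 3, 4]

slope, intercept, r_squared = linear_fit(displacement_mm, severity)
print(f"slope={slope:.2f} per mm, intercept={intercept:.2f}, R^2={r_squared:.2f}")
```

Because perceptual severity ratings are ordinal, a rank-based measure such as Spearman's correlation may be more appropriate in practice; the straight-line fit is used here only because it mirrors the question as posed.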

Data collection

For this project, we asked six highly-trained phoneticians to produce a number of different voice qualities in an MRI scanner. Such highly-trained subjects were necessary in order to ensure that a) each setting was being produced consistently (especially in the unusual conditions presented by an MRI scanner) and b) settings were being produced as independently from one another as possible. Following MRI capture, subjects repeated the experimental protocol in an anechoic chamber to obtain contemporaneous, high-quality audio and laryngograph recordings of their speech.

Subjects were asked to produce a range of held vowels (permitting 3D vocal tract capture) and some running speech (permitting 2D real-time MRI capture; for more details about the MRI data capture please see this page) using nine different vocal tract settings: neutral, raised and lowered larynx, closed and open jaw, backed and fronted tongue, and lip rounding and lip spreading.

Findings

The data from this project are currently being analysed. Please check back for a summary of our findings.

See also...

Anatomy, acoustics and the individual

Using MRI to study the variation in vocal tract shape among the population.

Data collection

Collecting vocal tract shape and articulation information using MRI, EMA and ultrasound.

Speech Synthesis

Using numerical acoustic modelling with MRI data to synthesise speech sounds.